The Ethics of AI: Are We Ready for Fully Autonomous Systems?

Artificial intelligence (AI) is advancing at an unprecedented pace, and as fully autonomous systems come closer to reality, we have to face the ethical implications that accompany them. From self-driving cars to decision-making algorithms in healthcare, finance, and law enforcement, AI has already started to affect everyday life. But are we really ready to grant machines decision-making power over critical issues? This article examines the ethical questions raised by AI, the trade-offs between the dangers and benefits of autonomy, and whether society is ready for a future of intelligent machines.

1. Fully Autonomous AI Systems Defined

Before turning to the ethical issues, it is worth defining what full autonomy in AI systems means. Such systems operate without a human in the loop, making decisions based on large volumes of data and predefined algorithms.

Examples of Fully Autonomous Systems

- Self-driving cars – vehicles that navigate highways and avoid obstacles without any human intervention.

- AI healthcare diagnostics – systems that analyze medical data to diagnose diseases and recommend treatments.

- Autonomous weapons – military drones or robotic soldiers that make combat decisions on their own.

- AI-assisted financial trading – algorithms that buy and sell stocks according to prevailing market swings, executing trades in mere milliseconds.

- Customer service chatbots – AI systems that can manage complex customer interactions.

These applications show great promise, but they raise many ethical questions about accountability, fairness, and control.

2. The Ethical Dilemmas of AI Autonomy

Who Is Responsible When AI Makes Mistakes?

Probably the most important ethical question is accountability. When an AI system causes harm, such as an accident involving a self-driving car or a potentially fatal error by an AI medical diagnosis system, who is accountable: the AI developer, the deploying company, or the user who relied on it? Unlike human decision-makers, AI systems cannot be held accountable in conventional legal terms.

Bias and Fairness in AI Decisions

AI systems are only as good as the data fed into them. If the data set contains biases, whether related to race, gender, or class, AI can entrench and amplify them. Examples:

- Hiring AI trained on biased recruitment data that ends up favoring certain demographics.

- Predictive policing algorithms that disproportionately target certain demographics.

- AI credit scoring that puts people at a disadvantage based on scant or prejudiced financial data.
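One way to make this kind of bias visible is to compare how often each group receives the favorable outcome. The sketch below is a minimal illustration, not a complete fairness audit: the function names, the group labels, and the sample decisions are all hypothetical, and the ratio it computes is only one of several possible fairness measures.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the share of favorable outcomes per group.

    `decisions` is an iterable of (group, selected) pairs, where `selected`
    is True when the system produced the favorable outcome.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in decisions:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(decisions):
    """Ratio of the lowest group selection rate to the highest (1.0 = parity)."""
    rates = selection_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received the favorable outcome, so no gap to measure
    return min(rates.values()) / highest


# Hypothetical hiring decisions: (group label, was shortlisted)
sample = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

print(selection_rates(sample))
print(round(disparate_impact_ratio(sample), 2))
```

In this made-up sample, group A is shortlisted three times out of four and group B once out of four, so the ratio is about 0.33. A ratio well below 1.0 does not prove discrimination on its own, but it is a signal that the training data and the model deserve a closer look.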

Privacy and Surveillance Issues

Autonomous AI systems are capable of collecting and processing data on an open-ended scale. Should AI have access to confidential medical histories, financial transaction records, or private communications? And how can misuse of that access be prevented?

AI in Warfare: Morality in Question

Autonomous weapons and military AI technologies pose profound ethical challenges. How can we be sure that, when life and death are at stake, the decision is not left to AI alone? The absence of human judgment in critical situations could lead to catastrophic consequences.

The Threat of Job Losses

AI does create employment opportunities, but it also threatens traditional forms of work. Jobs in manufacturing, customer service, and sometimes even creative fields are being replaced by automation. How do we balance efficiency with human employment?

3. The Case for AI Autonomy: Benefits and Opportunities

The ethical arguments against fully autonomous systems are weighty. Even so, AI that is developed and deployed responsibly could bring unparalleled benefits to society.

Efficiency and Productivity

Once created and regulated properly, AI can accomplish more than a human being can in a short period of time. It can carry out large volumes of repetitive tasks in fields such as healthcare, logistics, and finance. A few examples:

- AI-powered medical imaging that can detect diseases earlier than human doctors

- Autonomous resource optimization and waste reduction in supply chains

- AI financial advisors that help people make better investment decisions

Reducing Human Error

Humans make mistakes for many reasons, including fatigue, bias, and lack of adequate information. Properly designed AI can make objective, data-based decisions rather than emotional ones.

Improving Safety in High-Risk Industries

Autonomous AI can save lives in dangerous environments. Examples:

- Autonomous robots can do risky work such as mining, deep-sea exploration, and space travel.

- AI in aviation reduces the chance of human error in piloting.

- AI-assisted surgeries increase precision and reduce complications.

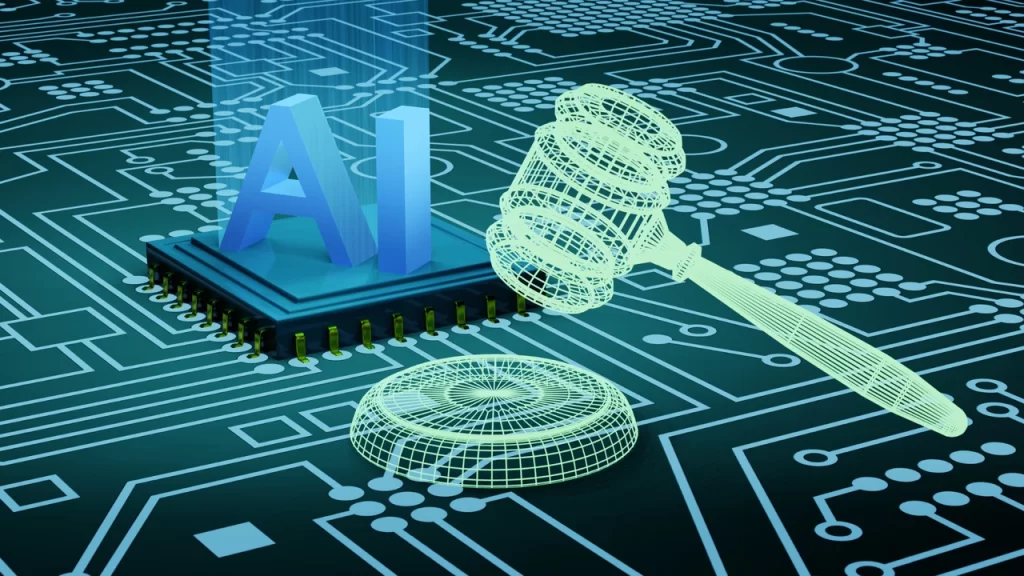

4. Are We Ready? The Need for AI Regulations and Ethical Guidelines

All of these benefits of autonomy could turn from a boon into a bane unless sound ethical frameworks and regulations are put in place.

Developing AI Ethics Standards

Countries and companies should formulate ethical standards for the development and deployment of AI. These standards should include principles such as:

- Transparency – AI systems should be explainable and their decision-making processes understandable.

- Accountability – There should be a clear attribution of responsibility for an AI system's actions.

- Fairness – Diverse and unbiased data should be used to train AI so that outcomes are fair.

- Privacy Protection – There should be safeguards on the usage of data to prevent misuse.

Setting Up Bodies for AI Governance and Supervision

International bodies such as the United Nations, along with newly formed AI regulatory agencies, should cooperate in building global governance frameworks for AI. Companies developing AI should be subject to ethical audits and compliance checks.

Public Awareness and Education

The general public should be educated about AI and its ethical implications. Knowing what AI is capable of and what it can endanger allows people to make informed decisions and advocate for responsible development.

5. The Future of AI: A Balanced Approach

AI autonomy is inevitable, but it must be approached with caution. Instead of fearing AI, we must focus on responsible innovation that prioritizes human well-being.

Humans and AI Together

AI should not replace humans; rather, it must be built to cooperate with them. Human involvement remains essential in critical decisions to ensure ethical outcomes.
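Keeping a human involved in critical decisions can be expressed as a simple routing rule: the system acts alone only when the stakes are low and it is confident, and hands everything else to a person. The following is a minimal sketch under stated assumptions. The `Decision` class, the `route` function, the 0.9 threshold, and the example actions are all hypothetical, and a real system would need a far more careful definition of "high stakes" and of confidence.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    action: str
    confidence: float  # the model's own confidence estimate, from 0.0 to 1.0
    high_stakes: bool  # True when a mistake could cause serious harm


def route(decision, confidence_threshold=0.9):
    """Return "auto" if the system may act alone, otherwise "human_review"."""
    if decision.high_stakes or decision.confidence < confidence_threshold:
        return "human_review"
    return "auto"


# Hypothetical examples of how decisions would be routed
print(route(Decision("approve_refund", 0.97, False)))
print(route(Decision("recommend_treatment", 0.99, True)))
print(route(Decision("approve_refund", 0.60, False)))
```

The design choice here is that high-stakes decisions always go to a human, no matter how confident the model claims to be, so confidence alone can never bypass human oversight.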

Ethical AI by Design

Ethics should be considered from the very start of designing an AI system, yet some designers turn to the ethics checklist only after they are already deep into the project. Ethical AI goes beyond doing no harm: it means doing social good.

Reform of Existing Laws and Policies

The law must adapt to the societal impacts of AI. New regulations should specifically address liability, data protection, and ethical use across different sectors.

Final Reflections

Are we ready for autonomous AI systems? The answer is both yes and no. AI can change the way we have done things so far, but first we have to deal with the ethical challenges it brings. The more we build AI around transparency, accountability, and fairness, the more its autonomy will enhance human life instead of putting it at risk.

The key to a future with ethical AI is balance: harnessing the strengths of AI while keeping a watchful human eye and ethical integrity. If we walk this fine line, AI can be a positive force, creating a smarter, safer, and more equitable world.
